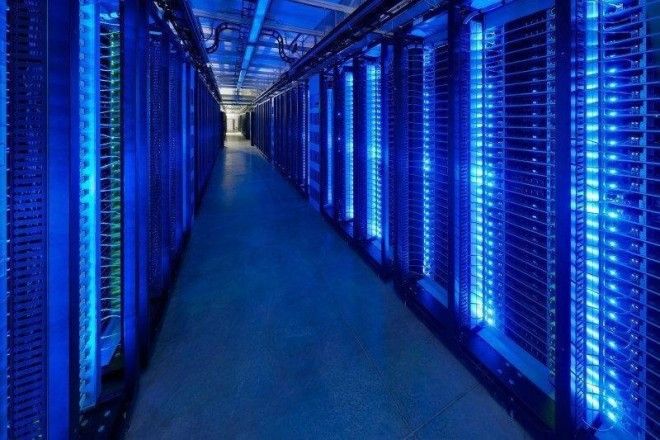

Much of that "magic" happens in the social network's own massive data centers.

Since 2011, Facebook has built centers in Oregon, Iowa, North Carolina, and Sweden with innovative, environmentally conscious designs that it has made available to the public through its Open Compute Project.

In July, it began constructing a fifth in Fort Worth, Texas.

Take a peek inside those centers and see where all your Facebook data "lives."

From the outside, Facebook's data centers look like massive warehouses. Here's an aerial view of the 300,000-square-foot North Carolina location:

But the building isn't all high-tech servers. Here's where employees sit:

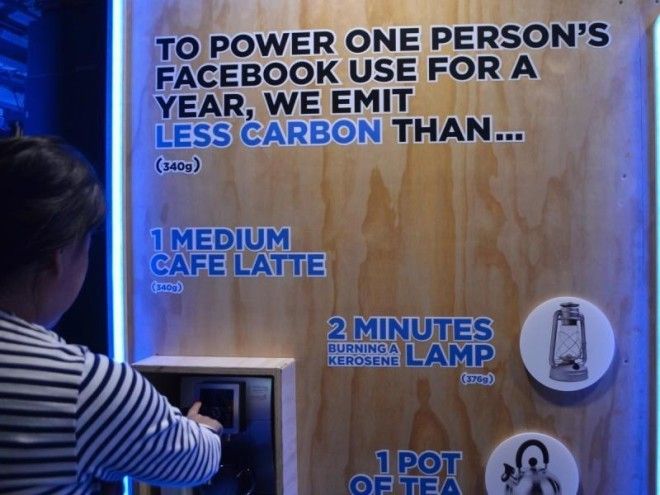

Thanks to its proprietary designs, Facebook has saved itself $2 billion in infrastructure costs since 2011.

When you look at one of Facebook's old server racks next to its new ones, the physical differences are immediately apparent.

Facebook has custom-designed its servers and power supplies, including its uninterruptible power supplies, or UPS, which provide emergency power if the main power fails. This is the inside of the company's data center in Lulea, Sweden.

Here's a closer look at one of its open racks. The rack gets integrated into the center infrastructure. Facebook's "grid to gates" philosophy dictates the interdependence of "everything from the power grid to the gates in the chips on each motherboard."

Facebook's goal is to make its centers as efficient as possible, which includes paying attention to little details, like eschewing the common practice of putting plastic bezels in front of its servers. Removing the plastic allows the servers to draw in more air.

Not that "efficient" means small. Its first Prineville, Oregon, data center alone has 950 miles' worth of wires and cables inside (that's roughly the distance between Boston and Indianapolis).

Here's the outside of Facebook's custom data center in Prineville, Oregon. It uses 38% less energy than Facebook's previous facilities while costing 24% less.

That is great not only for Facebook, but also for the planet.

Because of the way servers are connected, technicians can easily find, remove, and fix failed components.

Technicians can zoom around the part of the data center they're responsible for using a portable diagnostic station.

As its servers whir away, Facebook needs to prevent them from overheating. In its Swedish center, Facebook sucks air in from outside to cool its tens of thousands of servers.

Cool air also flows through a series of air filters and a "misting chamber," where a fine spray helps to further control the temperature and humidity.

Air hitting the servers can't be too cold, either. Here is a "mixing room" where cold outdoor air combines with server exhaust heat to regulate the temperature.
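For readers curious about the arithmetic behind a mixing room, here is a minimal sketch of how blending two airstreams sets the supply temperature. It assumes both streams have the same heat capacity, so the mixed temperature is a simple weighted average. The function names and every temperature below are illustrative assumptions, not Facebook's actual control logic or figures.

```python
def exhaust_fraction(outdoor_c: float, exhaust_c: float, target_c: float) -> float:
    """Share of the mixed airstream that should be warm server exhaust.

    Hypothetical helper: assumes equal heat capacity for both airstreams,
    so the mix temperature is a linear blend of the two inputs.
    """
    if exhaust_c <= outdoor_c:
        raise ValueError("exhaust air must be warmer than outdoor air")
    fraction = (target_c - outdoor_c) / (exhaust_c - outdoor_c)
    # Clamp to [0, 1]: 0 means outdoor air only, 1 means exhaust air only.
    return min(1.0, max(0.0, fraction))


def mixed_temperature(outdoor_c: float, exhaust_c: float, fraction: float) -> float:
    """Temperature of the blend for a given exhaust fraction."""
    return (1.0 - fraction) * outdoor_c + fraction * exhaust_c


# Illustrative numbers only: a cold day outside, warm exhaust, mild target.
share = exhaust_fraction(outdoor_c=-10.0, exhaust_c=35.0, target_c=20.0)
supply = mixed_temperature(-10.0, 35.0, share)
print(f"recirculate {share:.0%} exhaust air to supply {supply:.1f} C")
```

On a day when the outdoor air is already warmer than the target, the clamp returns zero, which in this simplified model means no exhaust air is recirculated at all.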

Facebook calls the area where all this happens the "cooling penthouse." The top half of each facility manages all the cooling, which takes advantage of the fact that cold air falls and hot air rises.

So next time you're on Facebook, try to visualize all your interactions passing through these data centers, where Facebook's servers process more than 10 *trillion* queries a day.